NCEDC Tutorial#

NoisePy is a Python software package for computing and processing ambient seismic noise cross correlations. This tutorial introduces NoisePy through a toy problem on NCEDC data. It can be run locally or in the cloud.

The data is stored on AWS S3 as the NCEDC Data Set: https://ncedc.org/db/cloud/getstarted-pds.html

First, we install the noisepy-seis package.

# Uncomment and run this line if the environment doesn't have noisepy already installed:

# ! pip install noisepy-seis

Warning: NoisePy uses obspy as a core Python module to manipulate seismic data. If you use Google Colab, restart the runtime now for proper installation of obspy on Colab.

Import necessary modules#

Then we import the basic modules

%load_ext autoreload

%autoreload 2

import os

from datetime import datetime, timezone

from datetimerange import DateTimeRange

from noisepy.seis import cross_correlate, stack_cross_correlations, __version__ # noisepy core functions

from noisepy.seis.io.asdfstore import ASDFCCStore, ASDFStackStore # Object to store ASDF data within noisepy

from noisepy.seis.io.s3store import NCEDCS3DataStore # Object to query NCEDC data on S3

from noisepy.seis.io.channel_filter_store import channel_filter

from noisepy.seis.io.datatypes import CCMethod, ConfigParameters, FreqNorm, RmResp, StackMethod, TimeNorm

from noisepy.seis.io.channelcatalog import XMLStationChannelCatalog # Required stationXML handling object

from noisepy.seis.io.plotting_modules import plot_all_moveout

print(f"Using NoisePy version {__version__}")

path = "./ncedc_data"

os.makedirs(path, exist_ok=True)

cc_data_path = os.path.join(path, "CCF")

stack_data_path = os.path.join(path, "STACK")

S3_STORAGE_OPTIONS = {"s3": {"anon": True}}

Using NoisePy version 0.1.dev1

We will work with two days of data from NCEDC. The continuous data is organized as one miniSEED file per day and per channel. For this example, you can choose any year since 1993. Here we cross correlate two days, one day at a time.

# Common URL prefix of the NCEDC S3 bucket for the NC network.

S3_DATA = "s3://ncedc-pds/continuous_waveforms/NC/"

# timeframe for analysis

start = datetime(2012, 1, 1, tzinfo=timezone.utc)

end = datetime(2012, 1, 3, tzinfo=timezone.utc)

timerange = DateTimeRange(start, end)

print(timerange)

2012-01-01T00:00:00+0000 - 2012-01-03T00:00:00+0000

The station information, including the instrument response, is stored as StationXML in the following bucket:

S3_STATION_XML = "s3://ncedc-pds/FDSNstationXML/NC/" # S3 storage of stationXML

Ambient Noise Project Configuration#

We prepare the configuration of the workflow by declaring and storing parameters into the ConfigParameters() object and/or editing the config.yml file.

# Initialize ambient noise workflow configuration

# default config parameters which can be customized

config = ConfigParameters()

config.start_date = start

config.end_date = end

config.acorr_only = False # only perform auto-correlation or not

config.xcorr_only = True # only perform cross-correlation or not

config.inc_hours = 24 # (int) length in hours of the

# time chunk that the parallelization works on.

# Data is loaded in memory, so reduce memory use with a smaller

# inc_hours if there are more than 400 stations.

# At regional scale for NCEDC, we can afford 20 Hz data and inc_hours

# set to one day of data.

# pre-processing parameters

config.sampling_rate = 20 # (int) Sampling rate in Hz of desired processing (it can be different than the data sampling rate)

config.cc_len = 3600 # (float) basic unit of data length for fft (sec)

config.step = 1800.0 # (float) overlapping between each cc_len (sec)

config.ncomp = 3 # 1 or 3 component data (needed to decide whether do rotation)

config.stationxml = False # station.XML file used to remove instrument response for SAC/miniseed data

# If True, the stationXML file is assumed to be provided.

config.rm_resp = RmResp.INV # select 'no' to not remove response and use 'inv' if you use the stationXML,'spectrum',

############## NOISE PRE-PROCESSING ##################

config.freqmin, config.freqmax = 0.05, 2.0 # broad band filtering of the data before cross correlation

config.max_over_std = 10 # threshold to remove windows of bad signals: set it to a very large value (e.g., 10**9) if you prefer not to remove them

################### SPECTRAL NORMALIZATION ############

config.freq_norm = FreqNorm.RMA # choose between "rma" for a soft whitening or "no" for no whitening. Pure whitening is not implemented correctly at this point.

config.smoothspect_N = 10 # moving window length to smooth spectrum amplitude (points)

# here, choose smoothspect_N for the case of a strict whitening (e.g., phase_only)

#################### TEMPORAL NORMALIZATION ##########

config.time_norm = TimeNorm.ONE_BIT # 'no' for no normalization, or 'rma', 'one_bit' for normalization in time domain,

config.smooth_N = 10 # moving window length for time domain normalization if selected (points)

############ cross correlation ##############

config.cc_method = CCMethod.XCORR # 'xcorr' for pure cross correlation OR 'deconv' for deconvolution;

# FOR "COHERENCY" PLEASE set freq_norm to "rma", time_norm to "no" and cc_method to "xcorr"

# OUTPUTS:

config.substack = True # True = smaller stacks within the time chunk. False: it will stack over inc_hours

config.substack_windows = 1 # how long to stack over (for monitoring purpose)

# if substack=True, substack_windows=2, then you pre-stack every 2 correlation windows.

# for instance: substack=True, substack_windows=1 means that you keep ALL of the correlations

# if substack=False, the cross correlation will be stacked over the inc_hour window

### For monitoring applications ####

## we recommend substacking every 2-4 cross correlations and storing the substacks

# e.g.

# config.substack = True

# config.substack_windows = 4

config.maxlag = 200 # lags of cross-correlation to save (sec)
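To get a feel for how cc_len, step and inc_hours interact, the sketch below counts how many windows fit in one time chunk. It assumes that step is the spacing in seconds between the starts of consecutive windows and that only complete windows are kept; count_windows is a hypothetical helper written for this illustration, not a NoisePy function, and NoisePy may trim the data differently.

```python
def count_windows(chunk_sec: float, cc_len: float, step: float) -> int:
    """Number of complete windows of length cc_len in a chunk of chunk_sec
    seconds, when consecutive window starts are step seconds apart."""
    if chunk_sec < cc_len:
        return 0
    return int((chunk_sec - cc_len) // step) + 1


# Values taken from the configuration above.
inc_hours = 24
cc_len = 3600.0
step = 1800.0

n_windows = count_windows(inc_hours * 3600, cc_len, step)
print(n_windows)  # 47
```

With substack=True and substack_windows=1, each of these windows would be kept as a separate correlation, which is why substacking increases the size of the output.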

# For this tutorial, make sure the output of any previous run is removed

os.system(f"rm -rf {cc_data_path}")

os.system(f"rm -rf {stack_data_path}")

0
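The rm -rf commands above rely on a Unix shell. A portable alternative, shown here as a sketch on a throwaway directory rather than on the tutorial's own paths, is shutil.rmtree from the standard library. The helper name reset_dir and the file name old_result.h5 are made up for this illustration.

```python
import os
import shutil
import tempfile


def reset_dir(path: str) -> None:
    """Delete path and everything under it; do nothing if it does not exist."""
    shutil.rmtree(path, ignore_errors=True)


# Build a temporary directory that mimics a leftover CCF folder.
base = tempfile.mkdtemp()
demo_cc_path = os.path.join(base, "CCF")
os.makedirs(demo_cc_path)
open(os.path.join(demo_cc_path, "old_result.h5"), "w").close()

reset_dir(demo_cc_path)
reset_dir(demo_cc_path)  # calling it again on a missing path is harmless
print(os.path.exists(demo_cc_path))  # False

shutil.rmtree(base)  # clean up the temporary directory itself
```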

Step 1: Cross-correlation#

In this instance, we read the data directly from the NCEDC bucket into the cross correlation code. We do not attempt to recreate a data store, so we go straight to step 1, the cross correlation.

We first declare the data and cross correlation stores:

config.networks = ["NC"]

config.stations = ["KCT", "KRP", "KHMB"]

config.channels = ["HH?"]

catalog = XMLStationChannelCatalog(S3_STATION_XML, path_format="{network}.{station}.xml",

storage_options=S3_STORAGE_OPTIONS)

raw_store = NCEDCS3DataStore(S3_DATA, catalog,

channel_filter(config.networks, config.stations, config.channels),

timerange, storage_options=S3_STORAGE_OPTIONS) # Store for reading raw data from S3 bucket

cc_store = ASDFCCStore(cc_data_path) # Store for writing CC data
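The path_format argument describes how a StationXML file name is built from the network and station codes. The sketch below only illustrates that string template with plain Python; station_xml_url is a hypothetical helper, and how XMLStationChannelCatalog resolves paths internally is not shown here.

```python
S3_STATION_XML = "s3://ncedc-pds/FDSNstationXML/NC/"
PATH_FORMAT = "{network}.{station}.xml"


def station_xml_url(base_url: str, path_format: str, network: str, station: str) -> str:
    """Join the bucket prefix and the file name obtained from the template."""
    file_name = path_format.format(network=network, station=station)
    return base_url.rstrip("/") + "/" + file_name


urls = [
    station_xml_url(S3_STATION_XML, PATH_FORMAT, "NC", sta)
    for sta in ["KCT", "KRP", "KHMB"]
]
for url in urls:
    print(url)
```

The resulting strings have the same form as the file names reported in the log messages when the catalog is read.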

Get the time range of the data in the data store inventory:

span = raw_store.get_timespans()

print(span)

[2012-01-01T00:00:00+0000 - 2012-01-02T00:00:00+0000, 2012-01-02T00:00:00+0000 - 2012-01-03T00:00:00+0000]
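The two spans printed above are consecutive one-day windows that cover the requested time range. As a rough sketch of how such windows can be generated, assuming fixed 24-hour chunks, the following uses only the standard library. split_timespans is a hypothetical helper and is not how the data store computes its spans.

```python
from datetime import datetime, timedelta, timezone


def split_timespans(start: datetime, end: datetime, hours: int):
    """Split [start, end) into consecutive chunks of at most `hours` hours."""
    spans = []
    chunk = timedelta(hours=hours)
    t = start
    while t < end:
        spans.append((t, min(t + chunk, end)))
        t += chunk
    return spans


start = datetime(2012, 1, 1, tzinfo=timezone.utc)
end = datetime(2012, 1, 3, tzinfo=timezone.utc)

fmt = "%Y-%m-%dT%H:%M:%S%z"
for s, e in split_timespans(start, end, hours=24):
    print(s.strftime(fmt), "-", e.strftime(fmt))
```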

Get the channels available during a given time span:

channel_list=raw_store.get_channels(span[0])

print(channel_list)

2026-05-01 23:03:02 | INFO | channelcatalog._get_inventory_from_file() | Reading StationXML file s3://ncedc-pds/FDSNstationXML/NC/NC.KRP.xml

2026-05-01 23:03:02 | INFO | channelcatalog._get_inventory_from_file() | Reading StationXML file s3://ncedc-pds/FDSNstationXML/NC/NC.KCT.xml

2026-05-01 23:03:02 | INFO | channelcatalog._get_inventory_from_file() | Reading StationXML file s3://ncedc-pds/FDSNstationXML/NC/NC.KHMB.xml

2026-05-01 23:03:03 | INFO | s3store.get_channels() | Getting 9 channels for 2012-01-01T00:00:00+0000 - 2012-01-02T00:00:00+0000

[NC.KCT.HHE, NC.KCT.HHN, NC.KCT.HHZ, NC.KHMB.HHE, NC.KHMB.HHN, NC.KHMB.HHZ, NC.KRP.HHE, NC.KRP.HHN, NC.KRP.HHZ]

Perform the cross correlation#

The data will be pulled from NCEDC, cross correlated, and stored locally if this notebook is run locally.

If you are re-calculating, we recommend clearing the old cc_store.
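Before running the real computation, it can help to see the idea behind two of the configuration choices, one-bit time normalization and a plain cross correlation, on a tiny synthetic example. The sketch below works in the time domain with pure Python lists and made-up sample values. It is only an illustration of the concepts: the configuration describes cc_len as the unit of data length for the FFT, so NoisePy's own processing is organized differently.

```python
def one_bit(trace):
    """One-bit normalization: keep only the sign of each sample (+1, 0 or -1)."""
    return [(x > 0) - (x < 0) for x in trace]


def xcorr(a, b, max_lag):
    """c[lag] = sum_n a[n] * b[n + lag] for lag in -max_lag..max_lag (samples).
    A positive peak lag means that b is a delayed copy of a."""
    out = {}
    for lag in range(-max_lag, max_lag + 1):
        total = 0
        for i in range(len(a)):
            j = i + lag
            if 0 <= j < len(b):
                total += a[i] * b[j]
        out[lag] = total
    return out


# Synthetic trace, and a copy delayed by 3 samples.
a = [0.2, 1.5, -0.7, 3.0, -1.1, -0.4, 0.9, -2.5, 0.3, 1.2, 0.0, 0.0, 0.0]
delay = 3
b = [0.0] * delay + a[:-delay]

# A large transient changes the amplitude but not the sign of the sample,
# so the one-bit version of the trace is unaffected.
a_spiky = a.copy()
a_spiky[3] = 3000.0

cc = xcorr(one_bit(a_spiky), one_bit(b), max_lag=5)
best_lag = max(cc, key=cc.get)
print(best_lag)  # 3
```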

cross_correlate(raw_store, config, cc_store)

2026-05-01 23:03:03 | INFO | correlate.cross_correlate() | Starting cross-correlation with 4 cores

2026-05-01 23:03:03 | INFO | s3store.get_channels() | Getting 9 channels for 2012-01-01T00:00:00+0000 - 2012-01-02T00:00:00+0000

2026-05-01 23:03:03 | INFO | correlate.cc_timespan() | Checking for stations already done: 6 pairs

2026-05-01 23:03:03 | INFO | correlate.cc_timespan() | Still need to process: 3/3 stations, 9/9 channels, 6/6 pairs for 2012-01-01T00:00:00+0000 - 2012-01-02T00:00:00+0000

2026-05-01 23:03:31 | INFO | correlate.cc_timespan() | Starting CC with 6 station pairs

2026-05-01 23:03:33 | INFO | s3store.get_channels() | Getting 9 channels for 2012-01-02T00:00:00+0000 - 2012-01-03T00:00:00+0000

2026-05-01 23:03:33 | INFO | correlate.cc_timespan() | Checking for stations already done: 6 pairs

2026-05-01 23:03:33 | INFO | correlate.cc_timespan() | Still need to process: 3/3 stations, 9/9 channels, 6/6 pairs for 2012-01-02T00:00:00+0000 - 2012-01-03T00:00:00+0000

2026-05-01 23:04:00 | INFO | correlate.cc_timespan() | Starting CC with 6 station pairs
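The count of 6 station pairs in the log is what we expect for three stations when each station is also paired with itself. The sketch below reproduces that count, and also lists the nine component combinations that three components give for each station pair. The two-letter component labels are an assumption made for illustration, chosen to match the 'ZZ' label used later for plotting.

```python
from itertools import combinations_with_replacement, product

stations = ["NC.KCT", "NC.KHMB", "NC.KRP"]
components = ["E", "N", "Z"]

# Unordered station pairs, including each station paired with itself.
station_pairs = list(combinations_with_replacement(stations, 2))

# Ordered component pairs for one station pair (assumed labels).
component_pairs = ["".join(p) for p in product(components, repeat=2)]

print(len(station_pairs))    # 6
print(len(component_pairs))  # 9
```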

The cross correlations are saved as a single file for each channel pair and each increment of inc_hours. We will now stack the cross correlations over all time chunks and look at the results for all station pairs.

Step 2: Stack the cross correlation#

We now create the stack stores. Because this tutorial runs locally, we will use an ASDF stack store to output the data. ASDF is a data container built on HDF5 that is used in full waveform modeling and inversion. HDF5 behaves well locally.

Each station pair will have one H5 file with all components of the cross correlations. While this produces many H5 files, it has become the NoisePy team’s preferred option for two reasons:

the thread-safe installation of HDF5 is not trivial

a fixed grouping of station pairs within a single file is not flexible enough for a broad audience of users.

# open a new cc store in read-only mode since we will be doing parallel access for stacking

cc_store = ASDFCCStore(cc_data_path, mode="r")

stack_store = ASDFStackStore(stack_data_path)

Configure the stacking#

There are various methods for optimal stacking. We refer to Yang et al (2022) for a discussion and comparison of the methods:

Yang X, Bryan J, Okubo K, Jiang C, Clements T, Denolle MA. Optimal stacking of noise cross-correlation functions. Geophysical Journal International. 2023 Mar;232(3):1600-18. https://doi.org/10.1093/gji/ggac410

Users can choose among linear stacking, phase-weighted stacking pws (Schimmel et al, 1997), robust stacking (Yang et al, 2022), the autocovariance filter acf (Nakata et al, 2019), and nroot stacking. The stacking method is selected by setting config.stack_method, for example to StackMethod.LINEAR.

If all is chosen, the current code only outputs linear, pws, and robust, since nroot is less common and acf is computationally expensive.
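To make the difference between stacking methods concrete, here is a small sketch of a linear stack and an n-th root stack on three short synthetic traces, written with the standard library only. The functions and sample values are made up for illustration and use the textbook definitions. NoisePy's own implementations may differ, for example in normalization or in the choice of power.

```python
import math


def linear_stack(traces):
    """Sample-by-sample arithmetic mean of the traces."""
    n = len(traces)
    return [sum(samples) / n for samples in zip(*traces)]


def nroot_stack(traces, power=2):
    """N-th root stack: average the signed n-th roots of the samples,
    then raise the average back to the n-th power, keeping its sign."""
    n = len(traces)
    stacked = []
    for samples in zip(*traces):
        mean_root = sum(math.copysign(abs(x) ** (1.0 / power), x) for x in samples) / n
        stacked.append(math.copysign(abs(mean_root) ** power, mean_root))
    return stacked


traces = [
    [0.0, 1.0, 4.0, 1.0, 0.0],
    [0.0, 1.0, 4.0, 1.0, 0.0],
    [0.0, 1.0, 4.0, 1.0, 9.0],  # last sample is an incoherent spike
]

lin = linear_stack(traces)
nroot = nroot_stack(traces, power=2)
print(lin[2], nroot[2])  # coherent peak: both stacks give 4.0
print(lin[4], nroot[4])  # spike: 3.0 in the linear stack, 1.0 in the n-th root stack
```

The coherent peak survives both stacks unchanged, while the spike present in only one trace is reduced more strongly by the n-th root stack.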

config.stack_method = StackMethod.LINEAR

method_list = [method for method in dir(StackMethod) if not method.startswith("__")]

print(method_list)

['ALL', 'AUTO_COVARIANCE', 'LINEAR', 'NROOT', 'PWS', 'ROBUST', 'SELECTIVE']
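The list above is obtained by filtering dir(). If StackMethod is a standard Python Enum, which is an assumption here, its members can also be listed by iterating over the class. The sketch below uses a small stand-in enum, DemoStackMethod, with made-up values so that it runs without NoisePy.

```python
from enum import Enum


class DemoStackMethod(Enum):
    # Stand-in for illustration only; the values are not taken from NoisePy.
    LINEAR = "linear"
    PWS = "pws"
    ROBUST = "robust"


# Approach used above: filter the attribute names returned by dir().
names_via_dir = [m for m in dir(DemoStackMethod) if not m.startswith("__")]

# Alternative: iterate over the enum, which yields its members directly.
names_via_iter = [m.name for m in DemoStackMethod]

print(names_via_dir)
print(names_via_iter)
```

Iterating gives the member objects themselves, so their values are available as well, for example through m.value.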

cc_store.get_station_pairs()

config.stations = ["*"]

config.networks = ["*"]

stack_cross_correlations(cc_store, stack_store, config)

2026-05-01 23:04:02 | INFO | stack.initializer() | Station pairs: 6

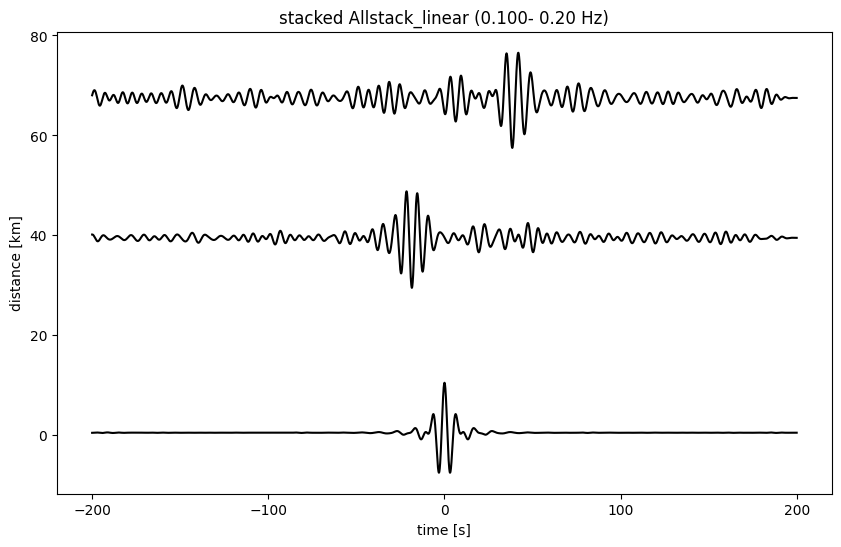

QC_1 of the cross correlations for Imaging#

We now explore the quality of the cross correlations. We plot the moveout of the cross correlations, filtered in some frequency band.

cc_store.get_station_pairs()

[(NC.KHMB, NC.KRP),

(NC.KCT, NC.KRP),

(NC.KCT, NC.KCT),

(NC.KCT, NC.KHMB),

(NC.KHMB, NC.KHMB),

(NC.KRP, NC.KRP)]

pairs = stack_store.get_station_pairs()

print(f"Found {len(pairs)} station pairs")

sta_stacks = stack_store.read_bulk(timerange, pairs) # no timestamp used in ASDFStackStore

Found 6 station pairs

plot_all_moveout(sta_stacks, 'Allstack_linear', 0.1, 0.2, 'ZZ', 1)

2026-05-01 23:04:06 | INFO | plotting_modules.plot_all_moveout() | Plotting 6 pairs from Allstack_linear

200 8001
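The two numbers printed last are consistent with the configuration: a maximum lag of 200 s, and 8001 samples for a two-sided lag axis sampled at 20 Hz, since 2 × 200 × 20 + 1 = 8001. This reading of the output is an assumption based on the values of maxlag and sampling_rate set earlier. The sketch below builds such a lag axis with a hypothetical helper, lag_axis.

```python
def lag_axis(maxlag: float, sampling_rate: float):
    """Lag times in seconds from -maxlag to +maxlag, including zero lag."""
    n_side = int(round(maxlag * sampling_rate))
    return [k / sampling_rate for k in range(-n_side, n_side + 1)]


lags = lag_axis(maxlag=200, sampling_rate=20)
print(len(lags))          # 8001
print(lags[0], lags[-1])  # -200.0 200.0
```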